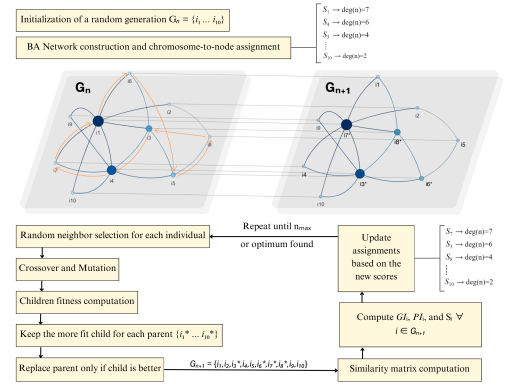

A Scale-Free Network for Modeling a Homogeneous Ensemble

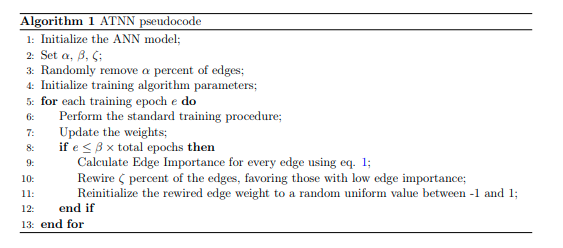

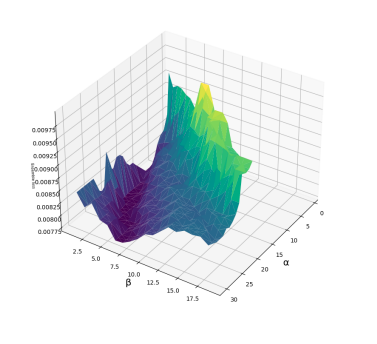

Ensemble learning aims to improve predictions by aggregating multiple learners into a model ensemble, thereby capturing the underlying data distribution more accurately. This study proposes a scale-free network-based ensemble learning (NEL) algorithm that uses neural networks as base learners and allows a balance between the accuracy of individual base learners and their diversity. Optimal weights for ensemble integration are calculated from the centrality of each node in the heterogeneous network. Because of its power-law degree distribution, the scale-free topology of NEL inherently allows for ensemble pruning. Experimental results, obtained by averaging ensemble performance over ten runs, indicate that our approach performs favorably and is robust.
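To make the weighting and pruning idea concrete, the following is a minimal sketch and not the NEL algorithm itself. The abstract does not state how the network is grown or which centrality measure is used, so the sketch assumes a Barabási–Albert preferential-attachment graph and degree centrality. All function names (`barabasi_albert`, `centrality_weights`, `prune`, `ensemble_predict`) and parameter values are hypothetical, chosen only for illustration.

```python
import random


def barabasi_albert(n, m, seed=0):
    """Grow an undirected graph with n nodes by preferential attachment.

    Assumption: a Barabasi-Albert model stands in for the scale-free
    topology; the source does not specify the construction.
    """
    rng = random.Random(seed)
    adj = {i: set() for i in range(n)}
    targets = list(range(m))  # the first new node links to nodes 0..m-1
    repeated = []             # each node appears once per incident edge
    for new in range(m, n):
        for t in targets:
            adj[new].add(t)
            adj[t].add(new)
        repeated.extend(targets)
        repeated.extend([new] * m)
        # Draw m distinct targets with probability proportional to degree.
        chosen = set()
        while len(chosen) < m:
            chosen.add(rng.choice(repeated))
        targets = sorted(chosen)
    return adj


def centrality_weights(adj):
    """Normalized degree centrality (an assumed choice) as ensemble weights."""
    degree = {v: len(nbrs) for v, nbrs in adj.items()}
    total = sum(degree.values())
    return {v: d / total for v, d in degree.items()}


def prune(weights, keep):
    """Keep the `keep` most central learners and renormalize their weights."""
    top = sorted(weights, key=weights.get, reverse=True)[:keep]
    total = sum(weights[v] for v in top)
    return {v: weights[v] / total for v in top}


def ensemble_predict(predictions, weights):
    """Weighted average of per-learner scalar predictions."""
    return sum(weights[v] * predictions[v] for v in weights)


# Each node stands for one base learner (hypothetical sizes).
graph = barabasi_albert(n=50, m=2, seed=0)
weights = centrality_weights(graph)
pruned = prune(weights, keep=10)
```

In this sketch, the few high-degree hubs produced by preferential attachment receive most of the weight, so dropping low-degree nodes removes many learners while discarding little of the total weight. Whether this mirrors the pruning rule used in NEL is an assumption.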